The Clock Is Ticking: Why August 2026 Is a Hard Deadline

The EU AI Act entered into force on August 1, 2024, but most of its obligations, including those for high-risk AI systems used in employment, apply from August 2, 2026. From that point, companies using AI in the EU face binding obligations, and non-compliance can bring enforceable fines and operational disruption.

This is not a soft rollout. It’s a hard regulatory deadline.

For senior leaders, the implication is simple: preparation must start now, if it hasn’t already. The Act sets mandatory conditions for operating in the EU market, with fines for the most serious violations reaching up to €35 million or 7% of global annual turnover, whichever is higher. Beyond penalties, the risks include reputational damage, employee distrust, and interruptions to core HR operations.
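To make the exposure concrete, here is a minimal sketch of the penalty ceiling arithmetic. It assumes the ceiling is the higher of the fixed amount and the turnover percentage, which is how the Act frames its top penalty tier. The function name and parameters are illustrative, and the result is an upper bound, not a prediction of any actual fine.

```python
def max_fine_eur(global_annual_turnover_eur: int,
                 fixed_cap_eur: int = 35_000_000,
                 turnover_pct: int = 7) -> int:
    """Return the penalty ceiling: the higher of the fixed cap
    and the given percentage of global annual turnover."""
    turnover_based = global_annual_turnover_eur * turnover_pct // 100
    return max(fixed_cap_eur, turnover_based)


# EUR 2 billion turnover: 7% is EUR 140 million, above the EUR 35 million figure.
print(max_fine_eur(2_000_000_000))  # 140000000

# EUR 100 million turnover: 7% is only EUR 7 million, so EUR 35 million applies.
print(max_fine_eur(100_000_000))    # 35000000
```

For large employers, the percentage rather than the fixed figure is usually the number that matters.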

This article breaks down what the Act requires, which HR use cases are most exposed, and what actions are necessary now to ensure compliance before 2026.

Understanding the EU AI Act: A Risk-Based Framework

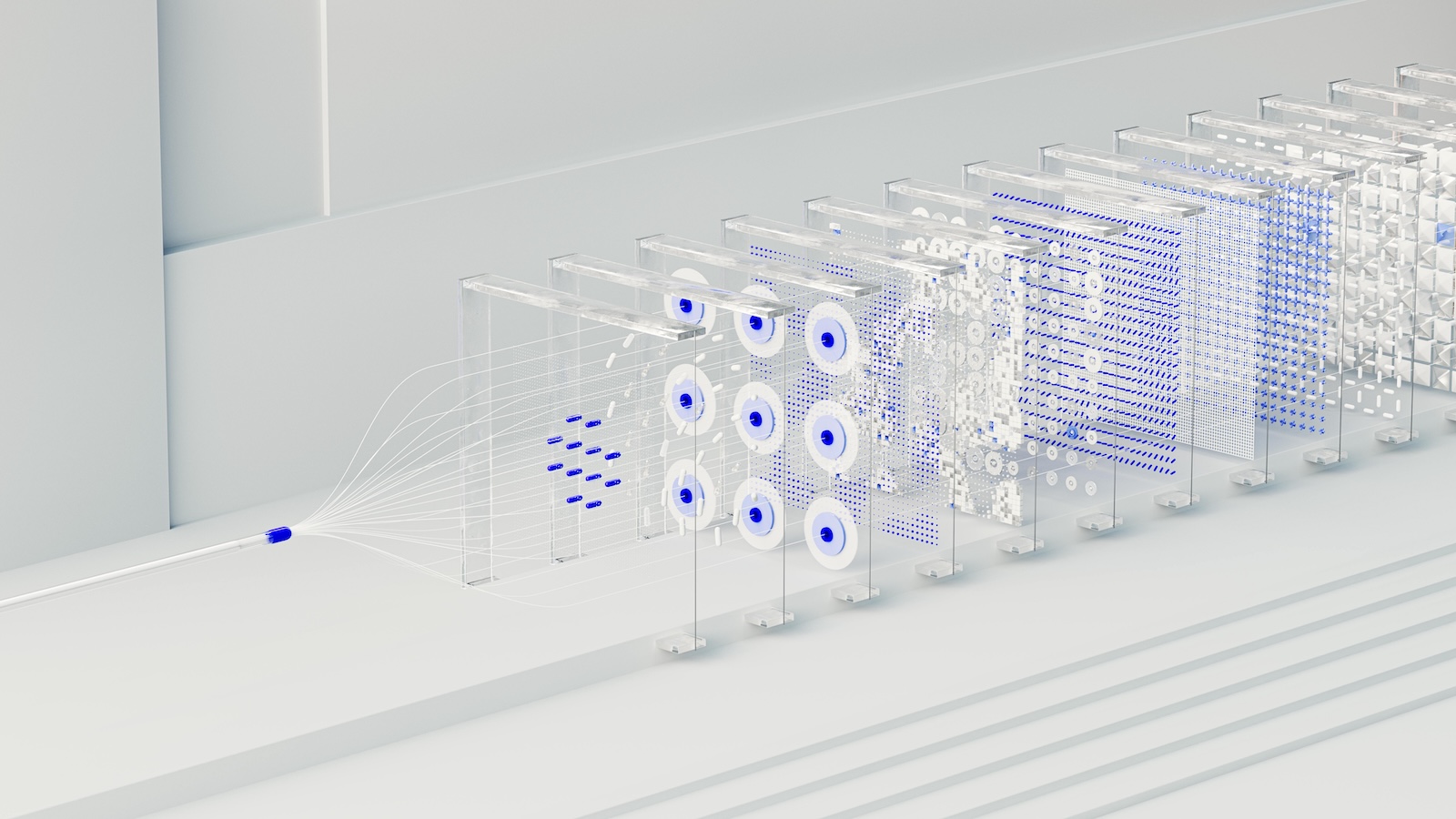

The EU AI Act introduces a tiered system that classifies AI based on risk:

Unacceptable Risk: Banned systems (e.g. manipulation, social scoring)

High Risk: AI impacting fundamental rights (most relevant for HR)

Limited Risk: Transparency-required systems (e.g. chatbots)

Minimal Risk: Low-impact tools with minimal obligations

For HR and compliance teams, the focus is on high-risk systems, including:

Recruitment and hiring tools

Performance evaluation systems

Workforce monitoring and management

Promotion, disciplinary, and termination decisions

These systems must meet strict requirements:

Continuous risk management

Strong data governance and bias mitigation

Clear transparency to employees

Human oversight and intervention capability

Ongoing monitoring after deployment

Audit-ready documentation

Why HR and Compliance Teams Should Not Wait

The EU AI Act overlaps heavily with GDPR and existing employment laws, creating layered obligations around privacy, fairness, and employee rights.

Non-compliance won’t just result in fines. It can also force companies to shut down AI systems mid-operation. Regulators are expected to audit and enforce actively, which makes a reactive approach risky.

AI governance is no longer optional. It’s becoming a core operational requirement.

Key AI Use Cases in HR: What’s Most Exposed?

The highest-risk areas typically include:

Recruitment: resume screening, interview analysis, predictive hiring

Performance: scoring systems, sentiment analysis, attrition prediction

Monitoring: productivity tracking, behavioral analytics

Employment decisions: promotions, compensation, terminations

For each of these, organizations must ensure:

Clear documentation of system design and purpose

Transparency with employees

Human review mechanisms

Continuous monitoring for bias and unintended outcomes

Extraterritorial Reach: Who’s Affected?

The Act applies to any company using AI in the EU, regardless of where the company is based or where the AI is developed.

This includes:

Multinational companies with EU employees

Global vendors selling AI tools into the EU

Remote teams operating within EU jurisdictions

A fragmented, region-specific approach won’t work. AI governance must be global.

Immediate Steps to Prepare for August 2026

1. Inventory AI Systems

Identify all AI tools used across HR and workforce decisions, including third-party and shadow AI. Classify them by risk level and document potential issues.
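As a starting point, the inventory can be as simple as a structured list with a first-pass risk label. The sketch below is one possible shape, not a prescribed format. The use-case labels, the system names, and the `approved_by_it` field are all hypothetical, and the triage only mirrors the categories named in this article. Legal review should decide the final classification, including whether anything falls into the banned tier.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"  # assigned only after legal review, never by this triage
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case labels, loosely following the HR categories in this article.
HIGH_RISK_USE_CASES = {"recruitment", "performance_evaluation",
                       "workforce_monitoring", "employment_decision"}
LIMITED_RISK_USE_CASES = {"chatbot"}


@dataclass
class AISystem:
    name: str
    vendor: str           # "internal" for in-house tools
    use_case: str
    approved_by_it: bool  # False flags potential shadow AI


def classify(system: AISystem) -> RiskTier:
    """First-pass triage by use case; not a legal determination."""
    if system.use_case in HIGH_RISK_USE_CASES:
        return RiskTier.HIGH
    if system.use_case in LIMITED_RISK_USE_CASES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# Illustrative entries only.
inventory = [
    AISystem("ResumeRanker", "ExampleVendor", "recruitment", True),
    AISystem("HR FAQ bot", "internal", "chatbot", True),
    AISystem("Meeting summarizer", "unknown", "note_taking", False),
]

for system in inventory:
    flag = "" if system.approved_by_it else " (not approved: possible shadow AI)"
    print(f"{system.name}: {classify(system).value}{flag}")
```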

2. Build a Governance Structure

Create a cross-functional team across HR, legal, IT, and compliance to oversee AI usage, approvals, and monitoring.

3. Update Policies

Align internal policies with the Act’s requirements, covering risk management, transparency, oversight, and monitoring.

4. Implement Human Oversight

Ensure humans can review, intervene in, and override AI decisions, especially in high-impact scenarios.
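One way to enforce this in software is a gate that refuses to finalize a high-impact recommendation until a named reviewer has signed off. The sketch below illustrates that pattern under simple assumptions. The action names, the `Recommendation` and `Decision` types, and the `finalize` function are hypothetical and not taken from any specific HR platform.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of actions that must never take effect without human review.
HIGH_IMPACT_ACTIONS = {"reject_candidate", "terminate", "deny_promotion"}


@dataclass
class Recommendation:
    subject_id: str
    action: str
    model_score: float


@dataclass
class Decision:
    subject_id: str
    action: str
    decided_by: str   # "ai" or the reviewer's identifier
    overridden: bool  # True when the reviewer changed the AI's recommendation


def finalize(rec: Recommendation,
             reviewer_id: Optional[str] = None,
             reviewer_action: Optional[str] = None) -> Decision:
    """Turn an AI recommendation into a decision, enforcing human review."""
    reviewed = reviewer_id is not None and reviewer_action is not None
    if rec.action in HIGH_IMPACT_ACTIONS and not reviewed:
        raise PermissionError(f"'{rec.action}' requires human review")
    if reviewed:
        # The reviewer's choice always wins; record whether it was an override.
        return Decision(rec.subject_id, reviewer_action, reviewer_id,
                        overridden=(reviewer_action != rec.action))
    return Decision(rec.subject_id, rec.action, "ai", overridden=False)


rec = Recommendation("cand-001", "reject_candidate", 0.21)
try:
    finalize(rec)  # blocked: no reviewer supplied
except PermissionError as err:
    print("Blocked:", err)

print(finalize(rec, reviewer_id="reviewer-7", reviewer_action="advance_candidate"))
```

Recording who decided and whether the recommendation was overridden also produces evidence that oversight happens in practice.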

5. Improve Transparency

Clearly communicate where and how AI is used, and ensure employees understand their rights.

6. Monitor Continuously

Track system performance, bias, and unintended effects after deployment. Establish reporting mechanisms so that issues reach the governance team.
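A basic bias check compares selection rates across groups and raises an alert when the gap grows too wide. The sketch below is illustrative only. The 0.8 threshold is an assumption borrowed from the "four-fifths" heuristic used in US employment practice and is not defined by the EU AI Act. The group labels and outcome data are invented.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, was_selected) pairs.
    Returns a dict mapping each group to its selection rate."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Assumption: alert threshold taken from the four-fifths heuristic, not from the Act.
ALERT_THRESHOLD = 0.8

# Invented data: group A is selected 40 times out of 100, group B 20 out of 100.
outcomes = ([("A", True)] * 40 + [("A", False)] * 60
            + [("B", True)] * 20 + [("B", False)] * 80)

rates = selection_rates(outcomes)
ratio = impact_ratio(rates)
if ratio < ALERT_THRESHOLD:
    print(f"Review needed: impact ratio {ratio:.2f} is below {ALERT_THRESHOLD}")
```

A check like this is a trigger for human investigation, not a verdict. A low ratio can have legitimate explanations, and a passing ratio does not prove the system is fair.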

7. Prepare for Audits

Maintain documentation and run internal audits to identify gaps before regulators do.
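An internal audit can start with a simple gap check: for each system, compare the evidence on file against a required list. The sketch below assumes a checklist derived from the high-risk requirements listed earlier in this article. The item names and system names are hypothetical and do not form an official checklist.

```python
# Hypothetical evidence checklist, mirroring the requirements named in this article.
REQUIRED_EVIDENCE = {
    "risk_management",
    "data_governance",
    "transparency_notice",
    "human_oversight",
    "post_deployment_monitoring",
    "technical_documentation",
}


def audit_gaps(evidence_on_file: dict[str, set[str]]) -> dict[str, list[str]]:
    """Return, for each system with gaps, the required items that are missing."""
    gaps = {}
    for system, items in evidence_on_file.items():
        missing = sorted(REQUIRED_EVIDENCE - items)
        if missing:
            gaps[system] = missing
    return gaps


# Illustrative records only.
evidence = {
    "ResumeRanker": {"risk_management", "human_oversight"},
    "AttritionModel": set(REQUIRED_EVIDENCE),
}

for system, missing in audit_gaps(evidence).items():
    print(f"{system} is missing: {', '.join(missing)}")
```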

The Cost of Inaction

Failing to prepare carries serious consequences:

Financial penalties of up to €35M or 7% of global annual turnover, whichever is higher

Forced shutdown of non-compliant AI systems

Reputational damage and loss of employee trust

Increased legal exposure under GDPR and employment law

Competitive disadvantage against compliant organizations

Turning Compliance into a Strategic Advantage

The EU AI Act is not just a regulatory burden. It’s also an opportunity.

Organizations that proactively implement transparent, accountable AI systems can build stronger employee trust, reduce risk, and differentiate themselves in a market where responsible AI is becoming a baseline expectation.